Every day, civil servants and officials are confronted with many voluminous documents that need to be reviewed and applied according to the information requirements of a specific task. This is the case when making decisions, drafting legislation and policies, reviewing legislation and policies, assessing the impact of legislation and policies, carrying out various analyses, describing data sources and services, and many other tasks. To enhance the processing efficiency and comprehensive analysis of collections, the Ministry of Public Administration of Republic Slovenia, together with the University of Ljubljana, developed a Semantic Analyzer - an open-source smart engine using AI for text processing in Slovene language. The prototype enables easy and efficient algorithmic processing of large corpuses of documents and texts with finding content similarities using advanced grouping and visualisation. A web tool supporting natural language (like legislation, public tenders) is planned to be developed.

Innovation Summary

Innovation Overview

Every day, civil servants and officials are confronted with many voluminous documents that need to be reviewed and applied according to the information requirements of a specific task. This is the case when making decisions, drafting legislation and policies, reviewing legislation and policies, assessing the impact of legislation and policies, carrying out various analyses, describing data sources and services, and many other tasks. Since reviewing many documents and selecting the most relevant ones is a time-consuming task, we have developed an AI-based approach for the content-based review of large collections of texts. The approach of semantic analysis of texts and the comparison of content relatedness between individual texts in a collection allows for timesaving and the comprehensive analysis of collections. The goal is to develop a general-purpose tool for analysing sets of textual documents. The project aims to select and implement semantic analysis building blocks that can be used to perform arbitrary types of document analyses and prototype analytical workflows that could support the tasks and decision-making in public administration.

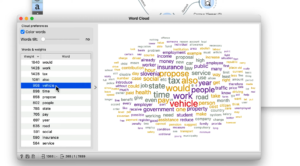

The building blocks we have developed include components to access data repositories, embed documents in vector spaces, search for similar documents, visualise document maps, search for characteristic terms, and rank documents according to their semantic similarity to selected terms. The semantic analyser scans the texts in a collection and extracts characteristic concepts from them. Depending on which concepts appear in several texts at the same time, it reveals the relatedness between them and, according to this criterion, determines groups and classifies the texts among them. The characteristic concepts of each group can be used to give a quick overview of the content covered in each collection. A graphical representation shows which group a text belongs to and thus allows you to find texts that deal with related topics. Alternatively, we can use a set of terms to describe the content we are looking for and find texts with these terms, as well as with terms that we have not mentioned but are close in content (e.g., synonyms, sub-names, super-names). The characteristic terms become a link between the documents, revealing interdependencies and/or contextual links between documents in one or more different text collections (e.g., finding the most relevant laws for a selected proposal for a measure from a collection of proposals for measures).

Beside Slovenian language it is planned to be possible to use also with other languages and it is an open-source tool. Semantic Analyzer enables quickly assembling workflows combining efficient algorithmic processing and visualisations of the large corpus of textual documents to find document groups, characterize them with keywords, perform semantic searching, or perform predictive machine learning and classification.

Innovation Description

What Makes Your Project Innovative?

The Semantic Analyzer is using emergent AI technologies to find semantic relations among texts and could be used to search through, among others, large legal documents quickly and easily for different concepts, phrases, as a large collection of texts can be sorted or grouped according to their content. Semantic Analyzer is an open-source tool that combines interactive visualisations and machine learning to support users in fast prototyping the semantic analysis of a large collection of textual documents. The principal innovation of the Semantic Analyzer lies in the combination of interactive visualisations, visual programming approach, and advanced tools for text modelling. The target audience of the tool are data owners and problem domain experts from public administration.

What is the current status of your innovation?

The tool opens up the possibility to search several sources at the same time, to find links, measures with similar contents and the legal basis for the corresponding proposals such as the governmental portal “Stop Bureaucracy” where citizens and entrepreneurs send their proposals for improvements (https://www.stopbirokraciji.gov.si/en/home). The tool is also used for examination of governmental database of the interactive website “Proposals to Government” (https://predlagam.vladi.si/) which contains suggestions from individuals to the government to solve a particular problem they have perceived. It contains over 12.800 proposals and has more than 36.500 users. The tool is also useful for searching in the governmental database “Single document -a uniform set of measures for a better regulatory and business environment” where main measures for better regulations and better business environment covering all relevant ministries can be found (https://enotnazbirkaukrepov.gov.si/).

Innovation Development

Collaborations & Partnerships

The project was developed in partnership with different stakeholders: public administration, academia, and SME. In the tool's design, we collaborated with experts from the University of Ljubljana. A University's spin-out company, Revelo Information Technologies, developed the tool. Use cases and documents were provided by the MPA’s internal users responsible for semantic interoperability, controlled vocabularies, and colleagues accountable for removing administrative burdens.

Users, Stakeholders & Beneficiaries

During the project's development different stakeholders were included: academia, SME and MPA users (for semantic interoperability, for controlled vocabularies and for removing administrative burdens). Users had substantially supported this project with their content requests and testing. Partners from UL FRI together with company Revelo organized workshops presenting AI for text processing based on their experiences. It is an open-source tool and will be upgraded with a user-friendly open web-based interface.

Innovation Reflections

Results, Outcomes & Impacts

Using the tool increases efficiency when browsing through different sources that are currently unrelated. We would also like to emphasise that the search is performed among credible sources that contain reliable and relevant information, which is of paramount importance in today's flood of information on the Internet.

The important results of the Semantic Analyzer project include:

- Ofering simple efficient tool supporting natural language processing to public administration users,

- Open-source software implementation of text analytics engine and user-friendly visual programming environment (http://orangedatamining.com, in Orange, download Text Mining add-on),

- Open-source code deposited on GitHub repository (https://github.com/biolab/orange3-text),

- A set of introductory educational videos (https://www.youtube.com/orangedatamining),

- Playlist on Semantic Analysis of Text,

- Examples of workflows and case studies (available at https://orangedatamining.com/workflows/Text-Mining).

Challenges and Failures

It was quite a challenge to bring the emerging technologies and their implications into the daily practice of the people who usually don’t work with them. Through some workshops showing them different possibilities of this tool, we inspired users to try to approach their work in a new, more efficient way. Another challenge we encountered in the project was in designing an intuitive and response interface for the users. The challenge has been solved through prototyping of the tool and engagement of the end users in the development cycle. In the future, we plan to improve the user interface for it to become more user-friendly.

Conditions for Success

Introduction of any AI-based tool requires strong engagement and enthusiasm from the end-user, support by leadership, and, in case of projects that use machine learning, seamless access to the data. For the further development and practical implications of the tool, it is important that the content and form of the texts and data collections which are used for searching, are complete, updated, and credible. An appropriate support should be encouraged and provided to collection custodians to equip them to align with the needs of a digital economy. Each collection needs a custodian and a procedure for maintaining the collection on a daily basis. In practice, we also have mostly linked collections, rather than just one collection used for specific tasks. Content testing of the tool should check the quality of the analysis, especially when it comes to documents describing different content areas, if the typical concepts of all areas are covered and that all related documents are searched.

Replication

Public administrations process many text documents, among which we must find those that speak about a certain topic and need to be reviewed to explain proposals or decisions. Free text in a classic, essay-style format is an example of unstructured data. Large sets of such essays are no longer capable of being quantitatively, let alone qualitatively, reviewed, understood, and compared by one individual. For the quality and efficiency of future work, it is essential to develop analytical tools that will help us to understand many texts, to sort them by content, and to carry out semantic searches for documents that are contextually related to the selected concepts. The tool we created is available freely, in open source, and has already been used in text mining by different groups worldwide. We believe that this tool has the potential to be used for other organisations from the public and private sector and for other interested parties (e. g. academia, students, or other citizens) in the future.

Lessons Learned

The use of emerging technologies supporting natural language processing in public administration is a new approach, especially in Slovenia. This project will lay the foundations and springboard for the development of further similar services. The development, integration and extension of text collections will increase the range of decision-making possibilities and the use of analyses of different texts for faster and better-quality work. Our experience working in partnership with different stakeholders – academia, SME and public administration gave us new insights. Different experiences and knowledge on technical and non-technical levels were combined with AI approaches to design the tool sot that it would have practical application for daily work in different fields. Our team consisted of diverse partners: academics, young IT engineers, lawyers, and other domain experts in public administration. During the development process all parties learned new knowledge from each other and received new insights.

Anything Else?

Public administrations store and generate large volumes of texts and documents. A semantic analyzer and similar emerging tools that can easily process large volumes of texts and documents from different sources are a step towards simplifying, optimizing, and automating the understanding of texts and the management of the processes that handle them. The development of tools is necessary to further develop analytical techniques in the field of text analysis. Tools such as the Semantic Analyzer support the development of the data economy and digitisation more broadly and aim to democratise artificial intelligence.

Supporting Videos

Status:

- Developing Proposals - turning ideas into business cases that can be assessed and acted on

- Implementation - making the innovation happen

- Evaluation - understanding whether the innovative initiative has delivered what was needed

- Diffusing Lessons - using what was learnt to inform other projects and understanding how the innovation can be applied in other ways

Date Published:

29 July 2023