In citizen participation projects, analysing contributions is often a huge challenge for administrations.

CitizenLab has developed machine-learning algorithms in order to help civil servants easily process thousands of citizen contributions and efficiently use these insights in decision-making.

The dashboards on our platform classify ideas, show what topics are emerging, summarise trends and cluster similar contributions by theme, demographic trait or location.

Innovation Summary

Innovation Overview

Digital participation platforms are important tools for increasing citizen engagement and improving government responsiveness. However, analysing the high volumes of citizen input collected on these platforms is extremely time-consuming and daunting for city officials; this technical difficulty can keep them from uncovering valuable learnings. Setting up a digital participation platform therefore isn’t enough: it’s also necessary to make data analysis more accessible so that civil servants can tap into collective intelligence and make better informed decisions.

The automation challenge we face as a civic tech company is shared by the public sector at large. Deloitte recently released a report on AI-augmented governments, in which they conclude that natural language processing could help free up 1.2 billion hours of work and save up to $41.1 billion per year for governments worldwide. The UK government, recognised as the reference in terms of digital government, outlined in its 2020 strategy that a better understanding of citizen needs, based on data and evidence, is the absolute priority for next-gen governments. The three key components in their digital transformation are improved online citizen-facing services, improved efficiency in delivering citizen services across channels, and more effective digitally-enabled collaboration internally. BCG also reports that AI will improve the efficiency of democracy as governments start to ingest all available data to build a fine-grained representation of citizens and adapt public policy accordingly. These are all very positive directions; however, there is a huge gap between these objectives and the reality of under-resourced and under-staffed public administrations.

CitizenLab aims to bridge the knowledge gap that currently exists in the public sector. Most small to medium administrations understand the need for better work processes and large-scale data analysis, but don’t have the tools, means or in-house knowledge to build custom solutions. We aim to empower civil servants and provide them with machine-learning augmented processes that will help them analyse citizen input, make better decisions, and collaborate more efficiently internally.

Now for the technical details. Over the past year, CitizenLab has developed its own NLP (Natural Language Processing) techniques, with the capacity to automatically classify and analyse thousands of contributions collected on citizen participation platforms. The algorithms identify the main topics and group similar ideas together into clusters, which can then be broken down by demographic trait or geographic location. The artificial intelligence is able to process ideas regardless of the language, and works for multi-lingual platforms. The platform administrators have access to all of this information at a glance in intelligent, real-time dashboards. The topic modelling makes it easy to see what citizens’ priorities are, and to make decisions accordingly. It helps public servants understand what citizens need: for instance, a city may launch a consultation on the environment, only to find that what actually comes up in the comments are concerns about mobility and taxes. Being able to break this down by demographic group and location also gives administrators a better overview of how priorities vary: one neighbourhood may prioritise better roads, while its neighbour needs more traffic stops.
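To make the breakdown by location more concrete, here is a minimal sketch in Python. It is not CitizenLab's implementation: it assumes ideas have already been assigned a topic, and the data, the neighbourhood names and the helper functions `topics_by_group` and `top_topic` are all hypothetical.

```python
from collections import Counter, defaultdict

# Hypothetical, already-classified ideas: (topic, neighbourhood).
# In a real platform the topic would come from the classification step.
ideas = [
    ("roads", "North"),
    ("roads", "North"),
    ("traffic stops", "South"),
    ("roads", "South"),
    ("traffic stops", "South"),
]


def topics_by_group(labelled_ideas):
    """Count how often each topic occurs within each group."""
    breakdown = defaultdict(Counter)
    for topic, group in labelled_ideas:
        breakdown[group][topic] += 1
    return breakdown


def top_topic(breakdown, group):
    """Return the most frequent topic for one group."""
    return breakdown[group].most_common(1)[0][0]


breakdown = topics_by_group(ideas)
for group in sorted(breakdown):
    print(group, "->", top_topic(breakdown, group))
```

The same aggregation would work for a demographic trait such as age bracket: only the second element of each pair changes.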

We believe that both governments and citizens benefit from this innovation. By automating the time-consuming task of data analysis, our platforms free up time for administrations to meaningfully engage with citizens. They give administrations a better understanding of what citizens want and what they prioritise, which in turn leads to better-informed decisions. From the citizens’ perspective, this open and transparent process encourages trust, increases support for policy decisions, and has a positive impact on the willingness to participate.

Our technology has been deployed to all our existing participation platforms, and is now actively being used by some of our clients. It has made a real impact on the way that they process insights, and has given them more confidence to use and share the findings of the platform. The time gain offered by the automated analysis and reporting has also allowed them to spend more time interacting with citizens and working to implement the ideas.

The next steps are to increase adoption of the feature and to make sure that all of our clients are making the best use of their automated dashboards. In the longer term, this technology could be applied to larger scale conversations such as social media, public forums or other places for online debate. The recent case of the Grand Débat in France has shown how important this technology is: without relevant and trustworthy data analysis, there can be no meaningful large-scale debate and citizen participation.

Innovation Description

What Makes Your Project Innovative?

Citizen participation platforms almost always stop at collecting contributions. They help governments gather input from citizens, but do nothing to help them analyse that input. The lack of support for that crucial step means that insights go undetected and that citizen participation isn’t having the impact it could have.

Our platform provides the analysis that’s needed. By using machine learning to analyse the citizen ideas we've collected, we provide a full end-to-end service for governments. The whole process is available within a single platform, making it easy to maintain an overview of the projects. This increases efficiency, decreases administrative costs linked to citizen participation, and leads to better decisions.

Finally, we have coupled our technical expertise with a deep understanding of citizen participation. Using our knowledge of the public sector, we have refined the algorithms to get to the information that cities need the most in order to make decisions.

Innovation Development

Collaborations & Partnerships

We worked with NLP consultants who helped us design a reliable product which we were then confident to implement and share with governments.

We have also been in contact with cities and civil servants to understand their requirements and make sure that the product we were building was aligned with their needs.

Finally, we relied on expertise from our team and public sector experts to scope out our impact and the benefits this initiative would bring to the public sector at large.

Users, Stakeholders & Beneficiaries

The CitizenLab platform has two types of beneficiaries: governments, and citizens.

In the short term, the benefits have mostly been felt by the administrations who use the platform. The civil servants have been able to gain precious time by easily accessing information and gathering insights.

In the longer term, citizens are the ones who see the positive impact of this innovation: with their input, governments can make better-informed decisions and are able to improve the relevant services.

Innovation Reflections

Results, Outcomes & Impacts

Since launching this feature in late 2018, we have seen cases where the automated analysis has made a true impact on the local administration and its relationship with citizens.

The city of Kortrijk uses the intelligent dashboards to easily process contributions by the 1,300 users of their platform. They have clustered the ideas into main topics to see what came out of conversations. The results of the analysis were also shared with the citizens, making this a real dialogue rather than a top-down initiative.

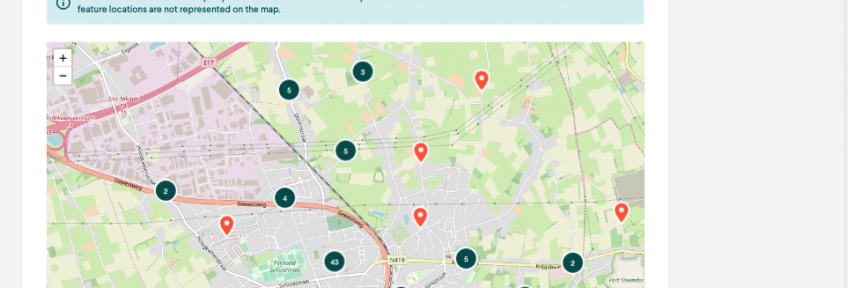

The city of Temse consulted its citizens on mobility, and located ideas on a map of the city. This helped the administration see where the main issues were, and understand where funds needed to be allocated.

CitizenLab is now helping the YouthForClimate organisation to analyse the 4,000 ideas posted on their participation platform and turn these into 16 policy recommendations. The topic modelling on a large scale has helped identify the most important themes.

Challenges and Failures

We face two main challenges: classification algorithms and human adoption.

We work with a classification algorithm that clusters, categorises and summarises input from citizens. It needs to be easily scalable, but also needs to adapt to different administrations' workflows since taxonomies used might vary by country or even by region. Our classification algorithms also need to support multiple languages on the same platform and make semantic links between languages, which adds an extra layer of technical complexity.
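The need to adapt to each administration's taxonomy can be illustrated with a deliberately simple Python sketch. It is an assumption-laden stand-in, not the algorithm described above: it uses plain keyword matching, and the taxonomy `TAXONOMY_CITY_A`, its keywords in several languages and the function `classify` are hypothetical. A production system would presumably rely on learned models rather than keyword lists.

```python
import re

# Hypothetical taxonomy for one administration. Another city could pass
# a different dictionary without any change to the classifier itself.
TAXONOMY_CITY_A = {
    "mobility": {"bike", "fiets", "vélo", "bus"},
    "environment": {"park", "tree", "boom", "arbre"},
}


def classify(text, taxonomy):
    """Return the category sharing the most keywords with the text, or None."""
    tokens = set(re.findall(r"\w+", text.lower()))
    scores = {cat: len(tokens & keywords) for cat, keywords in taxonomy.items()}
    best = max(scores, key=scores.get)
    # No keyword matched at all: leave the idea unclassified.
    return best if scores[best] > 0 else None


print(classify("Een veilige fiets route", TAXONOMY_CITY_A))
print(classify("Planter un arbre", TAXONOMY_CITY_A))
```

Keeping the taxonomy as data rather than code is the design point here: the categories can vary by country or region while the classification step stays the same.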

On the human side, we need to maintain clear workflows and make sure the technology is responding to real user needs in order to maximise adoption by the administration. We have learnt that the product shouldn’t be pushed without guiding the users through its benefits. Also, the human-machine interaction is crucial: how does one interpret and ‘trust’ the output generated by the machine? And what role can this output play in one’s workflow?

Conditions for Success

The first condition for the success of this initiative is the quality of the input. The technology relies on getting clear and detailed contributions from citizens, which means we need to make sure that citizens are guided to the right types of contributions.

The second point is user adoption: in order to be adopted, the tool has to be easy to use and trusted. Civil servants need to understand its benefits and feel that they can rely on the results. We can aim towards this by working to improve the user experience, explaining the methodology involved and making sure it integrates with their existing tools and workflows.

Finally, wider regulatory evolutions can have a decisive impact on the product’s success. If citizen participation is pushed on a state or regional level, this will encourage cities to invest in our platform. From there comes a virtuous circle: the more cities use the products, the more the algorithms can improve and the better the product gets.

Replication

As the appetite for citizen participation grows, so does the need for automated data analysis. Although citizen participation platforms are being set up throughout the world, very few have integrated analysis capacities. As seen with the difficult analysis of the Grand Débat contributions in France, this is a widespread issue preventing citizen contributions from truly influencing decision-making. Our technology could be replicated on any other platform.

There is also a true benefit to using our product: because we already work with multiple cities, our algorithms have been trained on multiple datasets and they’re more efficient than a one-off, local solution could be.

In the longer term, the technology we’re developing to analyse multi-lingual contributions could also be used to analyse wide-scale conversations on social media or public forums. It could help governments easily understand what citizens are talking about on a very large scale and adapt the relevant policies.

Lessons Learned

Throughout this process, we have learnt a lot about both the technology and the human factor behind it.

Regarding the technology, natural language processing and machine learning are evolving very quickly. An off-the-shelf solution can often be of great help, but it will only get you so far. We made the decision early on to invest in our own technology, and to build something we had complete control over. This has allowed us to be more reactive to change and to adapt to different markets, but also to be open with cities about how the technology works and to detail exactly how it has been built. Having an in-house expert also means we have the freedom to keep experimenting and improving.

We have found that it is worth investing both time and money in initial research. The decisions you make early on when building the algorithm will have a decisive influence over the way it develops later on. This goes for languages, but also for training models. It is easier to migrate to some languages than others, so make sure you pick the right one when you start. The thresholds you set early on regarding topic similarity will also shape how the algorithms evolve, and how accurate they are.
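The effect of a topic-similarity threshold can be sketched with a toy example in Python. It assumes bag-of-words vectors, cosine similarity and a greedy clustering rule, none of which are confirmed as the techniques used on the platform; the function names and sample ideas are hypothetical.

```python
import math
import re
from collections import Counter


def vectorise(text):
    """Turn a text into a bag-of-words vector (word -> count)."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a, b):
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def cluster(texts, threshold):
    """Greedy clustering: a text joins the first cluster whose first
    member is at least `threshold` similar, else it starts a new one."""
    clusters = []
    for text in texts:
        vec = vectorise(text)
        for c in clusters:
            if cosine(vec, c["seed"]) >= threshold:
                c["members"].append(text)
                break
        else:
            clusters.append({"seed": vec, "members": [text]})
    return [c["members"] for c in clusters]


ideas = [
    "more bike lanes downtown",
    "safer bike lanes near schools",
    "plant trees along the river",
]

for threshold in (0.3, 0.6):
    print(threshold, len(cluster(ideas, threshold)))
```

With these three ideas, a threshold of 0.3 groups the two bike-lane ideas together, while 0.6 keeps every idea in its own cluster. The same input therefore yields different topics depending on a value chosen early on.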

We have learnt that no matter how good the technology is, what truly matters are the humans behind it. In order for the product to work, civil servants have to show an interest and they have to trust that it will provide reliable results. Civil servants care about the results the tool can bring rather than the shiny tech it is built on, so that’s what we have centred our communications on. We have also put a lot of work into making the platform as easy to navigate and as results-oriented as possible.

When developing a public-sector oriented tool, make sure there is a specific, identified need. Time and resources are scarce in administrations, and civil servants will only invest both in a tool if it has proven value. Don’t forget to user-test your tool regularly as you’re building it: this will help you stay closely aligned with your end users’ needs. Finally, it’s possible that there is a need but no awareness of that need, or no recognition for your solution at first. Be prepared to educate users and to work to evangelise the sector.

There is also an important human factor when contributing to the platform. Our machine-learning processes rely on clear and detailed input, but that’s not how most contributors write. We’re therefore doing constant testing and tweaking on the platform to guide users towards the input format that we need. Just like the civil servants, citizens have to be given a reason to use the platform. We’ve been helping cities highlight the benefits it can bring and develop a clear message around participation.

Finally, it has been extremely interesting to see the sector develop alongside the tool. We had already witnessed the growing demand for citizen participation platforms, and we’re now seeing more and more interest in automated data analysis. Civil servants have also grown more aware of issues around data protection, and it’s a good sign that we are being challenged more and more on these questions in preliminary meetings. We would therefore recommend that anyone launching a similar product make sure they are able to hold themselves to the highest ethical and security standards.

Open Government Tags

accountability co-creation digital government engagement evaluation OGP open government participation social policy transparency

Date Published: 12 April 2018